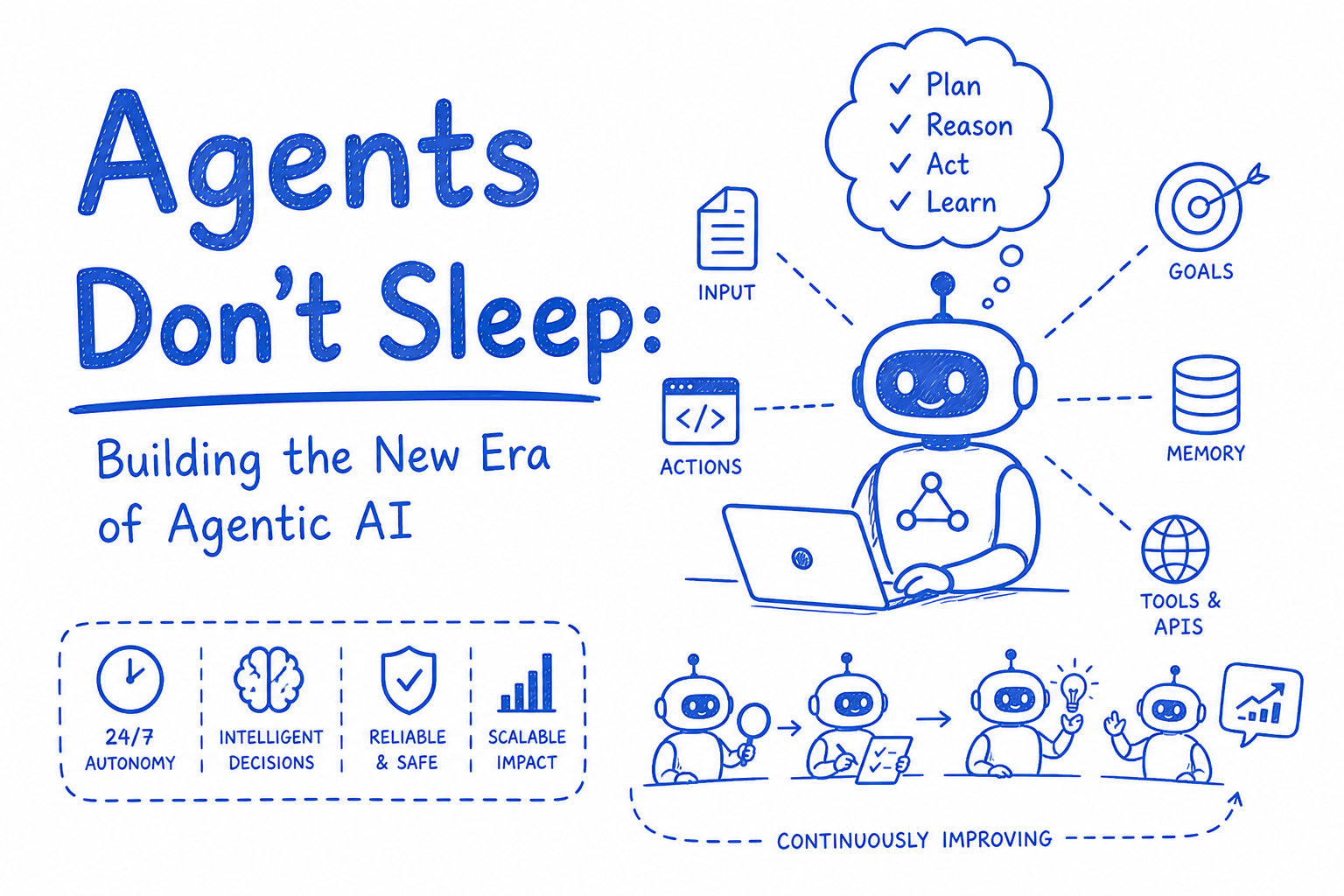

Agents Don't Sleep: Building the New Era of Agentic AI

LLMs that just answer questions are yesterday's news. Agentic AI acts, plans, and executes. Here's what that actually means and how to build it.

Zep Admin

May 3, 2026

For two years, we've been impressed by AI that talks. Now we're building AI that does.

Agentic AI isn't a chatbot with a better prompt. It's a system that perceives a goal, breaks it into steps, uses tools, handles failures, and keeps going without a human in the loop for every decision. It's the difference between asking someone "how do I book a flight?" and handing them your laptop.

What makes an AI system "agentic"?

Four things separate an agent from a glorified autocomplete:

- Planning: breaking a complex goal into subtasks autonomously

- Tool use: calling APIs, running code, browsing the web, reading files

- Memory: retaining context across steps (short-term) and sessions (long-term)

- Self-correction: detecting when something went wrong and trying a different approach
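The memory split above (short-term within a task, long-term across sessions) can be sketched in a few lines of Python. This is a minimal illustration, not any library's API: `AgentMemory`, `naive_summarize`, and the message budget are hypothetical. Short-term memory is a bounded list of recent steps that gets folded into a summary when it overflows. Long-term memory is a plain dict keyed by session ID, standing in for a real store.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


def naive_summarize(messages: List[str]) -> str:
    # Hypothetical stand-in: a real agent would ask the LLM to write this summary.
    return "Summary of earlier steps: " + " | ".join(m[:40] for m in messages)


@dataclass
class AgentMemory:
    """Short-term buffer with a summarization checkpoint, plus a long-term store."""

    max_messages: int = 6  # assumed budget; real systems budget tokens, not messages
    summarize: Callable[[List[str]], str] = naive_summarize
    short_term: List[str] = field(default_factory=list)
    long_term: Dict[str, List[str]] = field(default_factory=dict)

    def add(self, message: str) -> None:
        """Record one step; fold the oldest half into a summary when over budget."""
        self.short_term.append(message)
        if len(self.short_term) > self.max_messages:
            cut = len(self.short_term) // 2
            summary = self.summarize(self.short_term[:cut])
            self.short_term = [summary] + self.short_term[cut:]

    def remember(self, session_id: str, fact: str) -> None:
        """Persist a fact beyond the current task (a dict here; a database in practice)."""
        self.long_term.setdefault(session_id, []).append(fact)

    def recall(self, session_id: str) -> List[str]:
        """Return what earlier sessions stored for this session ID."""
        return list(self.long_term.get(session_id, []))


if __name__ == "__main__":
    mem = AgentMemory(max_messages=4)
    for i in range(6):
        mem.add(f"step {i}")
    print(mem.short_term)  # a summary message followed by the three most recent steps
    mem.remember("user-42", "prefers aisle seats")
    print(mem.recall("user-42"))
```

Counting messages is a simplification chosen to keep the sketch short; a production agent would measure tokens and let the model itself write the summary.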

If your AI can only respond, it's a chatbot. If it can act, retry, and adapt, it's an agent.

The 4 agent architectures you need to know

1. ReAct (Reason + Act): The agent reasons about what to do, takes an action, observes the result, then reasons again. Simple, debuggable, and widely used. Most production agents start here.

2. Plan-and-Execute: The agent generates a full plan upfront, then executes each step. Better for long-horizon tasks; worse when an early step fails and the rest of the plan becomes invalid.

3. Multi-agent systems: Multiple specialized agents coordinate, for example one searches, one writes, and one verifies. Powerful but complex: failures cascade across agents, so observability becomes critical.

4. Human-in-the-loop (HITL): The agent runs autonomously but pauses at high-stakes decisions for human approval. The most production-safe pattern in 2025. Not a limitation, a feature.
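A ReAct loop with a human-in-the-loop gate fits in a few dozen lines. The sketch below is illustrative only: the "model" is a scripted stub standing in for an LLM call, and `react_loop`, `search`, `send_email`, and the `HIGH_STAKES` set are hypothetical names, not any framework's API. Each iteration reasons (asks the model for the next step), acts (runs a tool), and observes (appends the result to the transcript). Tools marked high-stakes run only if `approve` returns `True`.

```python
from typing import Callable, Dict, List, Tuple


# Hypothetical tools; real ones would call APIs, run code, or read files.
def search(query: str) -> str:
    return f"3 results for '{query}'"


def send_email(to: str) -> str:
    return f"email sent to {to}"


TOOLS: Dict[str, Callable[[str], str]] = {"search": search, "send_email": send_email}
HIGH_STAKES = {"send_email"}  # assumed: actions that require human sign-off

# The model returns ("act", tool_name, argument) or ("finish", answer, "").
Step = Tuple[str, str, str]


def react_loop(model: Callable[[List[str]], Step], goal: str,
               approve: Callable[[str, str], bool], max_steps: int = 5) -> str:
    transcript = [f"Goal: {goal}"]
    for _ in range(max_steps):
        kind, name, arg = model(transcript)  # Reason: pick the next step from the history
        if kind == "finish":
            return name
        if name in HIGH_STAKES and not approve(name, arg):  # HITL: pause for approval
            transcript.append(f"Observation: human rejected {name}({arg})")
            continue
        tool = TOOLS.get(name)
        observation = tool(arg) if tool else f"error: unknown tool {name}"  # Act
        transcript.append(f"Action: {name}({arg}) -> {observation}")  # Observe
    return "stopped: step budget exhausted"


def scripted_model(transcript: List[str]) -> Step:
    # Stand-in for an LLM call: decides purely from how many steps have happened.
    if len(transcript) == 1:
        return ("act", "search", "flights to Lisbon")
    if len(transcript) == 2:
        return ("act", "send_email", "boss@example.com")
    return ("finish", "Done. Last observation: " + transcript[-1], "")


if __name__ == "__main__":
    print(react_loop(scripted_model, "book a flight", approve=lambda n, a: True))
    print(react_loop(scripted_model, "book a flight", approve=lambda n, a: False))
```

The `max_steps` budget is what stops a confused model from looping forever. In production, `approve` would block on a real review queue or UI rather than a lambda.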

What actually goes wrong (and how to handle it)

Agents fail in ways that chatbots never do:

- Tool call loops: the agent keeps retrying a failed action forever. Fix: max retries + fallback behavior.

- Context blowout: long task chains exhaust the context window. Fix: summarization checkpoints.

- Hallucinated tool calls: the agent invokes a tool with made-up parameters. Fix: strict output schemas + validation.

- Goal drift: the agent solves a subtask so well it forgets the original objective. Fix: restate the original goal on every turn, for example in the system prompt.
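The first and third fixes in the list above (a retry cap with a fallback, and validating tool arguments against a strict schema) can be sketched together. This assumes a hand-rolled schema mapping argument names to Python types; real systems more often use JSON Schema or the tool-calling schema of their model provider. `get_weather`, `WEATHER_SCHEMA`, `validate_args`, and `call_with_retries` are hypothetical, and `propose` stands in for asking the model for arguments.

```python
from typing import Any, Callable, Dict

# Hypothetical schema for a hypothetical get_weather tool: argument -> required type.
WEATHER_SCHEMA: Dict[str, type] = {"city": str, "days": int}


class ToolCallError(ValueError):
    """Raised when model-proposed arguments don't match the tool's schema."""


def validate_args(args: Dict[str, Any], schema: Dict[str, type]) -> None:
    missing = schema.keys() - args.keys()
    extra = args.keys() - schema.keys()  # made-up parameters are rejected too
    if missing or extra:
        raise ToolCallError(f"missing={sorted(missing)} extra={sorted(extra)}")
    for name, expected in schema.items():
        # Exact type match, so e.g. True is not accepted where an int is required.
        if type(args[name]) is not expected:
            raise ToolCallError(f"{name!r} must be {expected.__name__}")


def call_with_retries(propose: Callable[[int], Dict[str, Any]],
                      tool: Callable[..., str], schema: Dict[str, type],
                      max_retries: int = 3, fallback: str = "escalate to human") -> str:
    for attempt in range(max_retries):
        args = propose(attempt)  # stand-in for asking the model for arguments
        try:
            validate_args(args, schema)
        except ToolCallError:
            continue  # a real agent would feed the error message back to the model
        return tool(**args)
    return fallback  # retry budget spent: stop looping and hand off


def get_weather(city: str, days: int) -> str:
    return f"{days}-day forecast for {city}"


if __name__ == "__main__":
    attempts = [{"city": "Oslo"}, {"city": "Oslo", "days": "3"}, {"city": "Oslo", "days": 3}]
    print(call_with_retries(lambda i: attempts[i], get_weather, WEATHER_SCHEMA))
    print(call_with_retries(lambda i: {"town": "Oslo"}, get_weather, WEATHER_SCHEMA))
```

The key design choice is that invalid arguments never reach the tool: a hallucinated call costs one retry, and a model that never produces valid arguments ends in an explicit fallback instead of an infinite loop.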

Every agentic system needs a circuit breaker. Agents that can't fail gracefully will fail catastrophically.
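A circuit breaker is a standard resilience pattern borrowed from distributed systems, and a minimal Python sketch of one wrapped around a tool call looks like this. `CircuitBreaker`, `CircuitOpenError`, and the threshold and cooldown defaults are hypothetical names and values for illustration, not any agent framework's API. After a run of consecutive failures the breaker "opens" and refuses calls outright; once a cooldown passes it allows a single trial call and closes again if that succeeds.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised instead of calling the tool while the breaker is open."""


class CircuitBreaker:
    """Trips after `threshold` consecutive failures; after `cooldown` seconds
    it lets one trial call through (half-open) and closes again on success."""

    def __init__(self, threshold: int = 3, cooldown: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.threshold = threshold
        self.cooldown = cooldown
        self.clock = clock  # injectable so tests can fake time
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None and self.clock() - self.opened_at < self.cooldown:
            raise CircuitOpenError("circuit open: skipping call")
        try:
            result = fn()
        except Exception:
            self.failures += 1
            # Trip on reaching the threshold, or re-trip if a half-open trial fails.
            if self.opened_at is not None or self.failures >= self.threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0
        self.opened_at = None  # success closes the circuit
        return result


if __name__ == "__main__":
    now = [0.0]  # fake clock for the demo
    breaker = CircuitBreaker(threshold=2, cooldown=10.0, clock=lambda: now[0])

    def flaky_tool() -> str:
        raise TimeoutError("tool timed out")

    for _ in range(2):
        try:
            breaker.call(flaky_tool)
        except TimeoutError as err:
            print("failure:", err)
    try:
        breaker.call(lambda: "ok")
    except CircuitOpenError as err:
        print(err)  # blocked without touching the tool
    now[0] = 11.0
    print(breaker.call(lambda: "ok"))  # cooldown over: trial call succeeds
```

On its own the breaker only stops the bleeding. When `CircuitOpenError` fires, the agent still needs a graceful path: replan, switch tools, or hand off to a human.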

The stack in 2025

- Orchestration: LangGraph, CrewAI, AutoGen, or raw API loops

- Tool calling: OpenAI function calling, Anthropic tool use, MCP (Model Context Protocol)

- Memory: mem0, Zep, pgvector for long-term; in-context for short-term

- Observability: Langfuse, LangSmith, Arize. Non-negotiable for agents.

- Sandboxing: E2B, Modal, or Docker for agents that run code

Where agentic AI is actually shipping

Coding agents (Devin, Claude Code, Cursor), research agents that synthesize 50 sources into a report, sales agents that qualify leads and draft outreach, DevOps agents that triage alerts and open PRs, and customer support agents that resolve tickets end-to-end without escalation.

These aren't demos anymore. These systems are in production, handling real workloads and making real decisions.

The question is no longer "can AI do this task?" It's "how much autonomy are you comfortable giving it, and what does your fallback look like when it gets it wrong?"